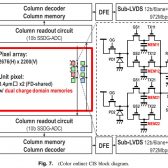

The Japanese Journal of Applied Physics has published a Canon Inc. paper on a global shutter entitled “A 3.4 μm pixel pitch global shutter CMOS image sensor with dual in-pixel charge domain memory” by Masahiro Kobayashi, Hiroshi Sekine, Takafumi Miki, Takashi Muto, Toshiki Tsuboi, Yusuke Onuki, Yasushi Matsuno, Hidekazu Takahashi, Takeshi Ichikawa, and Shunsuke Inoue.

From the paper:

In this paper, we describe a newly developed 3.4 μm pixel pitch global shutter CMOS image sensor (CIS) with dual in-pixel charge domain memories (CDMEMs) has about 5.3 M effective pixels and achieves 19 ke− full well capacity, 30 ke−/lx·s sensitivity, 2.8 e− rms temporal noise, and −83 dB parasitic light sensitivity. In particular, we describe the sensor structure for improving the sensitivity and detail of the readout procedure. Furthermore, this image sensor realizes various readout with dual CDMEMs. For example, an alternate multiple-accumulation high dynamic range readout procedure achieves 60 fps operation and over 110 dB dynamic range in one-frame operation and is suitable in particular for moving object capturing. This front-side-illuminated CIS is fabricated in a 130 nm 1P4M with light shield CMOS process.
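
For a rough sense of scale, the single-exposure dynamic range implied by the quoted full well capacity and temporal noise can be estimated with the common 20·log10(full well / noise) rule of thumb. This is a back-of-the-envelope sketch, not the paper's own calculation, and the function name is made up for illustration.

```python
import math


def dynamic_range_db(full_well_e: float, noise_e_rms: float) -> float:
    """Estimate dynamic range in dB as 20*log10(full well / noise floor).

    Assumption: this is the usual rule of thumb, not a formula taken
    from the paper itself.
    """
    return 20.0 * math.log10(full_well_e / noise_e_rms)


# Figures quoted in the abstract: 19 ke- full well, 2.8 e- rms temporal noise.
single_exposure_db = dynamic_range_db(19_000, 2.8)
print(f"single exposure: {single_exposure_db:.1f} dB")
```

The result lands well below the 110 dB figure, which fits the abstract attributing that number to the multiple-accumulation HDR readout rather than to a single exposure.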

I don't speak or truly understand the language of physics, but Richard over at Canon News does and has broken this paper down to make it a bit more understandable for us scientific mortals.

Based on 32MP APS-C, that works out to ~80MP full-frame. That's in the same ballpark as the 70MP rumored sensor.
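
The scaling behind that estimate can be sketched as follows, assuming the same pixel density on both formats and Canon's usual 1.6x APS-C crop factor (the crop factor is an assumption here, not something stated in the comment).

```python
def scale_megapixels(aps_c_mp: float, crop_factor: float = 1.6) -> float:
    """Scale a pixel count from APS-C to full frame at equal pixel density.

    Sensor area grows with the square of the crop factor, so the pixel
    count does too. The 1.6 default is an assumed Canon APS-C crop factor.
    """
    return aps_c_mp * crop_factor ** 2


full_frame_mp = scale_megapixels(32)
print(f"{full_frame_mp:.0f} MP")
```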

This could completely solve the rolling-shutter problems that the Canon ILCs suffer from, and also improve silent shutter performance.

What they're talking about is for video. The majority of photogs shoot at about 1-2 fps, compared with motion pictures (30-60 fps), with a really fast shutter speed (e.g. 1/3000 s). Comparing the two shutter types (rolling and global), the difference in image quality becomes critical as you increase the frame rate, but it only matters if you put the images together and turn them into motion pictures. Otherwise, this topic is useless for many of us photogs. I believe Canon uses a hybrid technology right now, combining an electronic shutter and a mechanical shutter curtain to capture the image (e.g. Canon 5D IV ... and EOS R!?!?)

Canon is behind on the video camera side, while small companies like Blackmagic have produced more suitable cameras for fast motion pictures.

Canon already has a global shutter for video. It's in the C700.

This is a video-only sensor; it's not going into a stills camera.

A fast electronic shutter means that you collect light only over a short period - e.g. 1/2000 second. With limited light you need high ISO or wide apertures. And it does not get around the problem that you cannot read out a whole (standard) CMOS chip fast enough for the required frame rates. As far as I understand it, the information has to be read line by line from top to bottom for the current frame before you can start reading the first line of the next frame.

With the new sensor layout you could maybe use 1/60 second for exposure at 24 fps and 1/40 second for readout (just an example: 1/60 s + 1/40 s = 5/120 s = 1/24 s, the time for 1 frame at 24 fps). The benefit of the described design is that the charge buffers (CDMEMs) store electrical charge proportional to the amount of light that hit the sensor during exposure, plus maybe a much faster readout/conversion speed. After exposure the information is "frozen" in the sensor (memory cells), and you can read out the "frozen still image" and convert it into storable "computer numbers" in a very short time before you clear the memory cells for the next exposure.
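
The frame-time arithmetic in that example can be checked with exact fractions. This is only a sketch of the commenter's simplified model, in which exposure and readout happen one after the other within a frame; the function name is hypothetical, and a real sensor may overlap the two phases.

```python
from fractions import Fraction


def readout_budget(fps: int, exposure_s: Fraction) -> Fraction:
    """Return the time left for readout in one frame after the exposure.

    Assumption: exposure and readout are strictly sequential, as in the
    example above.
    """
    frame_time = Fraction(1, fps)
    remaining = frame_time - exposure_s
    if remaining < 0:
        raise ValueError("exposure is longer than one frame period")
    return remaining


budget = readout_budget(24, Fraction(1, 60))
print(budget)  # 1/40
```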

1/60 s is about 33 times longer than 1/2000 s, which works out to roughly 5 stops - 5 stops lower ISO or 5 stops narrower aperture, which is always helpful if you are already at ISO 3200 with f/1.4 at 1/60 s in low light :)
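
The stop count follows from the base-2 logarithm of the exposure-time ratio, since each stop doubles the light collected. A minimal sketch (the function name is made up for illustration):

```python
import math


def stops_between(slow_shutter_s: float, fast_shutter_s: float) -> float:
    """Number of stops of extra light the slower shutter speed collects."""
    return math.log2(slow_shutter_s / fast_shutter_s)


stops = stops_between(1 / 60, 1 / 2000)
print(f"{stops:.2f} stops")
```

The exact value is slightly over 5 because 2000/60 is a little more than 32.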

No, it's not. As a strobist, I consider global shutter to be the next big innovation I'm looking forward to: flash sync at any shutter speed without workarounds like HSS or HS.

Actually I didn't. This paper is about the dual memory architecture of a global shutter sensor. A normal global shutter only has one memory cell per pixel.

For stills, dual memory is for the most part entirely unnecessary and impractical. As with any motion, you'll have blur. It simply can't be done on the sensor without some pretty convoluted brains sitting behind the sensor doing motion detection. While the time between shots is relatively short, you still have the exposure itself. For instance, a 1/20th of a second exposure would still, in this case, require at least a 1/10th + 1/120th of a second dual exposure saved to the memory. For video, it's easy: they just take two fast exposures and combine them, as they have much finer control over the shutter speed. That's not the case with stills. This isn't the first time Canon has done interleaved exposures for global shutter. For instance, it's already in production on their C700 video camera. However, all their patents and all their background work on this stuff have to do with video, and will stay with video.

What Canon has to do with stills is far more expensive. It needs to go to a stacked architecture, where the DR is not impacted by having memory cells at each pixel. Dual Pixel AF also complicates global shutter even more. This type of arrangement would need FOUR memory cells for each actual pixel. Ugh.

That's not what this paper is about, nor is it what this sensor is about.

It's an entirely different shutter process for stills than it is for video. But regardless, see what you want in it. This technology will not make it into a stills camera for Canon.

What you are not considering is the TIME for each exposure, which ranges from 30 s to 1/8000th of a second for stills. If you have a 1 s exposure, you're probably effectively taking a 0.5 s exposure and then a 2 s exposure to make up the HDR image, for a total of 2.5 s.

When you do this in video, your shutter speed can be controlled far more finely, because, well, it is more constrained and far more predictable.

Then you have the complexity of DPAF, which aggravates this by a factor of 2.

But really, read into it what you want. There's a reason Canon already has global shutter implemented with the single-memory-cell version of this technology and it's not available on any ILC.

We had global shutters 20 years ago using CCD technology, and still have them. The goal is to achieve it with large CMOS sensors. Tiny CMOS sensors are available with a GS, but there are lots of issues with a large sensor. The dual memory is a trick that can overcome the need for a supercomputer to read out 50 million photosites instantly.

They absolutely do expose their cutting edge ideas, when they patent them. Disclosure is part of the price for patent protection. I guarantee anything novel in this paper has already been patented. Companies' trade secrets are usually only things that are non-patentable, because there is no legal protection for a trade secret if it is disclosed in any way. Companies may defer patenting certain things until 1) they have an actual product in development, or 2) they want to publish some aspect of the design. But generally they patent quickly unless they worry the patent length wouldn't be long enough to cover the bulk of the product's market availability.

It's unlikely that Canon is doing anything to purposely confuse their competition. The competitors are more interested in what Canon has already announced or brought to market. Their competitors will reverse-engineer many aspects of Canon's designs 1) to see what Canon is doing, and 2) to see if Canon is infringing their patents. Canon does the same to their competitors. Companies may extrapolate certain performance numbers to have some expectation of certain future products, but I doubt they draw much from patents and journal papers. So much gets patented/published that's never brought to market.

Believe me, I work for a cell-phone manufacturer, and it is crazy what it's possible to learn about other chip-makers' designs just from poking them from the outside. Just one example: by running certain targeted benchmarks and putting the chip under thermal analysis, you can figure out what most of the chip die is dedicated to, and compare how much area they're spending on certain functions with what you are spending. Then you know where you're leading or trailing in the design. (Actually, you can guess a lot of the design just by seeing the die photo. Certain things like caches stick out like a sore thumb.)

Also I don't get how this does HDR without changing shutter speed for the 2 exposures. Does it lower ISO on one of the exposures?