neuroanatomist said: Pi said: jrista said: I'm curious about the f/4.5 bit...how exactly does that work? Is that only for the outer points? (I believe the center AF point is still f/2.8 compatible like with most Canon AF systems.)

It works at f/2.8, of course, but that is equivalent to f/4.5, even though some people do not want to hear about that. Assuming that it has the same precision, 1/3 of DOF or so, it is 1/3 (or whatever) of the f/4.5 eq. DOF. It is like shooting with FF at f/4.5, with 1/3 DOF precision. Well, that is 1/3 of the DOF at f/4.5. Even if f/4.5 is all you need as DOF, your precision is lower. Some empirical evidence on that can be found on the FoCal site.

Sorry, but that's incorrect. The precision of the AF points at a given aperture isn't specified in terms of DoF. Well, ok, maybe it is...but in that case, you keep using the letter F in the abbreviation, and I do not think it means what you think it means.

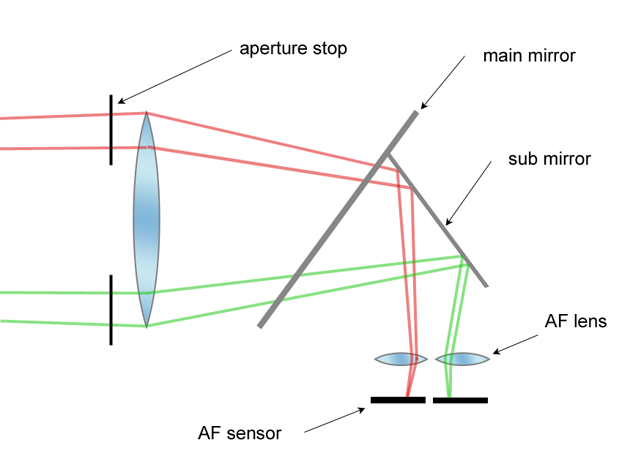

The AF sensor precision spec is 'within one depth of focus' for a standard precision point, and 'within 1/3 the depth of focus' for high precision (f/2.8, usually) points. Depth of focus is in 'image space' and is measured in micrometer distances at the AF (and/or image) sensor. It is related to, but distinct from, depth of field, which is measured in larger distances in 'object space'.

Depth of field is determined by aperture, subject distance, and focal length (and CoC, but since that is related to sensor size, let's leave that out). When we discuss 'shallower DoF on FF', that's a function of either subject distance (with APS-C you're further away for the same framing) or focal length (with APS-C, you need a shorter focal length for the same framing).

However, depth of focus is relatively insensitive to subject distance (once you're out of true macro range) and focal length. Thus, depth of focus is primarily determined by aperture, and that doesn't change with sensor size.
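To put rough numbers on that distinction, here is a minimal sketch using the standard thin-lens approximations: depth of focus ≈ 2·N·c·(1 + m), and depth of field from the usual hyperfocal-distance formulas. The function names and the 0.03 mm circle of confusion are my own assumptions for illustration (and the CoC is held fixed, as above); none of this comes from Canon's AF specification.

```python
def magnification(focal_mm, subject_mm):
    # Thin-lens magnification for a subject at distance subject_mm.
    return focal_mm / (subject_mm - focal_mm)


def depth_of_focus_mm(f_number, coc_mm, focal_mm, subject_mm):
    # Image-space tolerance at the sensor: t ~ 2 * N * c * (1 + m).
    m = magnification(focal_mm, subject_mm)
    return 2.0 * f_number * coc_mm * (1.0 + m)


def depth_of_field_mm(f_number, coc_mm, focal_mm, subject_mm):
    # Object-space depth of field from the near and far limits.
    # Only valid when the subject is closer than the hyperfocal distance.
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    if subject_mm >= hyperfocal:
        raise ValueError("subject at or beyond hyperfocal distance")
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2.0 * focal_mm)
    far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return far - near


# Assumed illustration values: f/2.8, CoC 0.03 mm, subject at 3 m.
N, COC, SUBJECT = 2.8, 0.03, 3000.0

focus_50 = depth_of_focus_mm(N, COC, 50.0, SUBJECT)
focus_85 = depth_of_focus_mm(N, COC, 85.0, SUBJECT)
field_50 = depth_of_field_mm(N, COC, 50.0, SUBJECT)
field_85 = depth_of_field_mm(N, COC, 85.0, SUBJECT)

if __name__ == "__main__":
    print(f"depth of focus: 50mm {focus_50:.4f} mm, 85mm {focus_85:.4f} mm")
    print(f"depth of field: 50mm {field_50:.1f} mm, 85mm {field_85:.1f} mm")
```

With these inputs, going from 50mm to 85mm at the same distance shrinks the depth of field to roughly a third, while the depth of focus at the sensor stays within a couple of percent. That is the sense in which depth of focus is driven mainly by aperture.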

OTOH, as stated, from a practical standpoint the APS-C sensor does have a deeper depth of field. So, even though the specified AF sensor precision is the same, the manufacturing tolerances for APS-C could, in theory, be looser. Users of 1-series bodies have long known their AF is 'better' than consumer cameras. I wonder if part of the recent improvements in measured precision of AF with the 5DIII and 6D derive at least in part from Canon tightening up the manufacturing tolerances.

Thanks Neuro for a well-written explanation about DOF, sensor size, focal length, distance to subject & background, AF focusing accuracy, etc.

That's the way I have understood & worked with these variables for some time in my photography. It's a shame many people who take photos and own cameras / lenses don't understand or apply these. People should practice, practice, practice, like I did years ago, taking photos with a FF at f/2.8 or an APS-C at f/1.8, and determining how to use and control DOF for impact in photos.

That's the reason I'm waiting for a new 50mm f/1.4 - f/2 lens; that's the focal length and DOF I enjoy using for many photos on my APS-C (Canon 7D).

I wonder if part of the recent improvements in measured precision of AF with the 5DIII and 6D derive at least in part from Canon tightening up the manufacturing tolerances.

And this, in red font, above is one of the things I'm very keen to see in a 7DmkII. I have worked very well with my 7D's AF (I have again practiced with many photos and different scenarios). I have been able to achieve photos with my 7D that I'm very happy with, including macro using AF (though I usually use MF for most of my macros), BIF, portrait, event photography, etc. There are a few scenarios where I would like the 7D's AF to be somewhat more accurate and consistent (like the 5DmkIII), but the 7D is no slouch WHEN you know how to use it.

Regards all....

Paul
