Nope, I am not confusing kWh x(times) hours with kW/hour.

I will give you a hint and see if that rings a bell for you:

You surely use rechargeable batteries in your flash.

With a smart charger you can set the current to an arbitrary value, as you know. If you charge too fast you can overheat the batteries. The higher the current the higher is the mW per unit of time intake by your batteries. In an extreme situation you can damage your batteries.

Recommended charge current is typically 1/10 of the battery's capacity. Yes, you can go 1/5, etc.

Now to further explain watts/unit of time relevance:

Some batteries sustain a high current fast discharge better than others.

Some batteries cause flash unit overheating as they run really hot. You know that of course.

They are not well suited for a rapid discharge. They are not able to supply the required current fast enough.

Even large gel AGM car batteries are affected by the same issue: a car AGM (gel) battery is typically good to be charged with a battery charger at around 10 A. If you charge it with a 20 A charger, you risk permanently damaging the gel. The gel literally bubbles, and the capacity of the battery will be greatly diminished.

Both examples clearly demonstrate that A/unit of time or W/unit of time are relevant and make sense.

Anyway, you get the gist.

Here again I see your tendency to make an incorrect statement, then perseverate on it and even compound it. Obviously, your bells are silent and you clearly don't 'get the gist'.

"The higher the current the higher is the mW per unit of time intake by your batteries." No. Current ≠ power (watts are units of power, and a watt is already a joule per second). Current is charge per unit of time, specifically coulombs (a unit of electric charge) per second. A higher current means more charge transferred per unit time.

Please carefully read your statements and examples above. You state that on a smart charger you can set the current to an arbitrary value, and that too high a value can damage batteries. You state, "Some batteries sustain a high current fast discharge better than others," which is true: a high current means a fast discharge, more charge transferred per unit time. You state that car batteries should be charged at 10 A, and that using a 20 A charger can damage them: higher current, more charge (too much) transferred per unit time. The term current already includes the time factor. You are perseverating on the claim that 'current per unit time' is relevant and makes sense, and that is just plain wrong. It is neither.
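To put numbers on that, here is a minimal Python sketch. The function name and the one-hour window are my own choices for illustration, not anything from a charger's manual; the only physics used is 1 A = 1 C/s.

```python
# Illustrative only: the function name and sample numbers are assumptions.

def current_amperes(charge_coulombs: float, seconds: float) -> float:
    """Current is charge per unit time: 1 A = 1 C/s."""
    return charge_coulombs / seconds

# The same one-hour window at the two charger settings from the example.
one_hour = 3600.0                 # seconds
charge_at_10a = 10.0 * one_hour   # coulombs moved in an hour at 10 A
charge_at_20a = 20.0 * one_hour   # coulombs moved in an hour at 20 A

print(current_amperes(charge_at_10a, one_hour))  # 10.0
print(current_amperes(charge_at_20a, one_hour))  # 20.0
print(charge_at_20a / charge_at_10a)             # 2.0
```

The 20 A setting moves twice the charge in the same hour. The "per time" is already inside the ampere, so there is nothing left to divide by.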

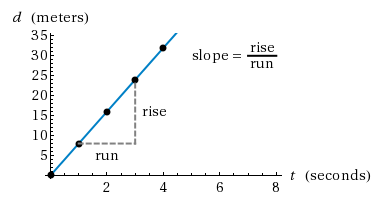

It's clear you are struggling with this concept; perhaps an analogy would help you. Charge is a time-independent quantity, measured in coulombs (C). Consider it analogous to distance, a time-independent quantity measured in meters (m). Velocity is the change in position per unit time; the SI unit is m/s (velocity is really a vector, but we'll ignore the directional component for this analogy). At constant velocity, distance plotted against time is a straight line, and if you cover more distance per unit time, the slope of that line increases.

That is analogous to current, C/s. If you transfer more charge per unit time, that's a higher current (and as you point out, too high a current when charging batteries can damage them).
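Written out in symbols (the letters are my own choice: x for position, Q for charge, t for time), the two rates have exactly the same form:

$$
v = \frac{dx}{dt}\ \left[\mathrm{m/s}\right], \qquad I = \frac{dQ}{dt}\ \left[\mathrm{C/s} = \mathrm{A}\right]
$$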

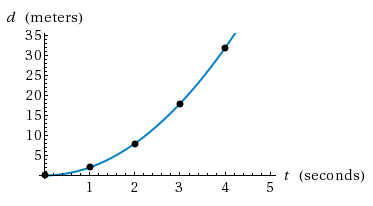

A change in velocity per unit time is acceleration; the units are m/s^2, and under constant acceleration, distance plotted against time is a parabola, a quadratic rather than a linear function.

Your concept of 'current per unit time' is flawed because it's like acceleration: a change in the rate of charge transfer over time. Charge per time per time. That would be like starting your smart charger at 1 A, then every second increasing it by 1 A... a minute later you'd be charging at 60 A and your batteries would all be melting down.
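A rough sketch of that runaway charger in Python, assuming the current is held for one second at each step (the stepping model and the function name are mine, purely for illustration):

```python
# Hypothetical charger that starts at 1 A and steps up 1 A every second.

def stepped_current(second: int, start_a: float = 1.0, step_a: float = 1.0) -> float:
    """Current in amperes during the given second (1-indexed)."""
    return start_a + step_a * (second - 1)

currents = [stepped_current(s) for s in range(1, 61)]

# Each step lasts 1 s, so amperes x 1 s gives coulombs for that step.
charge_ramped = sum(currents)
charge_steady = 1.0 * 60  # a plain 1 A charge over the same minute

print(currents[-1])    # 60.0
print(charge_ramped)   # 1830.0
print(charge_steady)   # 60.0
```

The 1 A/s figure describes how fast the current itself is changing. It is a different quantity from the current, in the same way that acceleration is a different quantity from velocity.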

Hopefully the above examples and illustrations will enable you to understand these concepts, which so far have eluded you. The take-away point is that the term 'current' itself already incorporates the 'per time' component; 'current per time' includes the time component twice, and thus is an irrelevant and useless concept.