I wonder if the dynamic range might suffer, though. Four times the resolution sounds nice, but that also means the signal has to be amplified four times, and the noise along with it. Of course, noise drops if you average four pixels, but that does not work for lower-frequency noise. Also, the R5 has some issues with colour shifting to green or magenta when you try to recover shadows at high ISO. That does not happen with the R3. Is that just because the R3 has a stacked BSI sensor?
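To put rough numbers on the averaging point, here is a minimal sketch in plain Python. It assumes Gaussian noise and treats "lower-frequency noise" as an error shared by all four neighbouring pixels; the function name `binned_noise_std` and the `shared_fraction` parameter are made up for this illustration and do not describe any particular camera.

```python
import random
import statistics

def binned_noise_std(n_blocks=20000, sigma=1.0, shared_fraction=0.0, seed=0):
    """Noise (standard deviation) left after averaging blocks of four pixels.

    shared_fraction is the share of the per-pixel noise variance that is
    common to all four pixels in a block (a stand-in for low-frequency noise).
    """
    rng = random.Random(seed)
    shared_sigma = sigma * shared_fraction ** 0.5
    indep_sigma = sigma * (1.0 - shared_fraction) ** 0.5
    means = []
    for _ in range(n_blocks):
        common = rng.gauss(0.0, shared_sigma)  # same error on all four pixels
        pixels = [common + rng.gauss(0.0, indep_sigma) for _ in range(4)]
        means.append(sum(pixels) / 4.0)
    return statistics.pstdev(means)

print(binned_noise_std(shared_fraction=0.0))  # independent noise only
print(binned_noise_std(shared_fraction=1.0))  # fully shared noise
```

With purely independent noise the averaged result comes out near half the per-pixel noise (the square root of four), while fully shared noise is not reduced at all.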

The upside of having four times as many pixels could be that demosaicing the RAW file works better, as you have one red, one blue and two green pixels for every pixel of the low-megapixel result. At the moment camera manufacturers cheat with the megapixel count, as they add all the coloured megapixels separately.

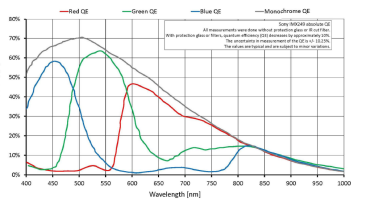

Except the filters in Bayer masks are not really "Red", "Green", and "Blue" if by RGB you mean the target RGB output colors of our emissive screens. "Red" isn't even remotely close, all of the cute little diagrams of RGB blocks that are supposed to be Bayer masks plastered all over the internet notwithstanding.

Actual color-correct image of a partially removed Bayer mask.

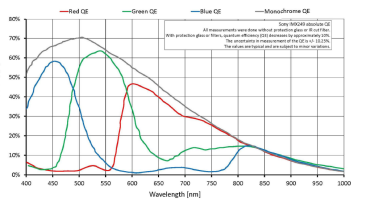

Typical sensitivity of a Bayer masked digital camera sensor.

Measured output of a fairly typical RGB monitor.

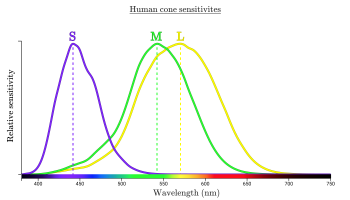

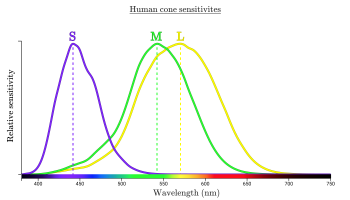

The whole idea that only the two "other" color values must be interpolated from each monochromatic luminance value output by each sensel is grossly misinformed. ALL of the color channel values of each pixel in an RGB (or CIE, or L*a*b, or CMYK) image are interpolated from the monochromatic luminance values in the raw data. Just as with our human retinas, all three filters allow some of all visible light through. They simply pass more of the wavelengths near the peak transmissivity of each filter type and less of the wavelengths further away from that peak.
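A rough sketch of what that overlap means in practice, in plain Python. The filter peaks, widths, and the 3x3 matrix below are hypothetical numbers picked for illustration, not measurements from any real sensor or raw converter, and the Gaussian curve shape is an assumption. The sketch also skips the spatial (demosaicing) step and only shows how each output channel ends up as a mix of all three raw signals.

```python
import math

# Hypothetical filter curves: (peak wavelength in nm, width in nm).
# Illustrative numbers only, not measured from any real sensor.
FILTERS = {"R": (600.0, 50.0), "G": (535.0, 50.0), "B": (460.0, 40.0)}

# Hypothetical 3x3 color matrix; each row sums to 1 so a neutral raw
# triplet stays neutral after conversion.
COLOR_MATRIX = [
    [1.6, -0.5, -0.1],
    [-0.3, 1.5, -0.2],
    [0.0, -0.4, 1.4],
]

def filter_response(wavelength_nm, peak_nm, width_nm):
    """Gaussian-shaped transmission: highest at the peak, small but nonzero away from it."""
    return math.exp(-0.5 * ((wavelength_nm - peak_nm) / width_nm) ** 2)

def raw_triplet(wavelength_nm):
    """Relative raw signal under the "R", "G", and "B" filters for one wavelength."""
    return [filter_response(wavelength_nm, *FILTERS[name]) for name in ("R", "G", "B")]

def to_output_rgb(raw):
    """Each output channel is a weighted mix of all three raw channels."""
    return [sum(w * v for w, v in zip(row, raw)) for row in COLOR_MATRIX]

raw = raw_triplet(580.0)   # yellowish light registers under all three filters
print(raw)
print(to_output_rgb(raw))
```

With these made-up curves, 580 nm light produces a strong signal under both the "R" and "G" filters and a faint one under "B", and none of the three output values is simply copied from a single raw value.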

Without this overlap between our short, medium, and long wavelength retinal cones, our color vision would be impossible. Without the overlap between the "R", "G", and "B" filters over our camera sensors, getting color-accurate images from digital cameras would also be impossible. Color is not intrinsic to any wavelength of light, or any other wavelength of electromagnetic radiation, for that matter. Color is a construct of the system that perceives it when certain wavelengths of EMR cause biochemical responses in our retinas that are then processed and assigned colors by our brains. In short, color is not a property of what we call visible light; it is a property of the perception of a narrow portion of the overall EMR spectrum.