Were you not stopping down? I thought that was typical for landscape shooting? I have had landscape photos ruined because I did not realize how much corner vignetting correction was taking place.

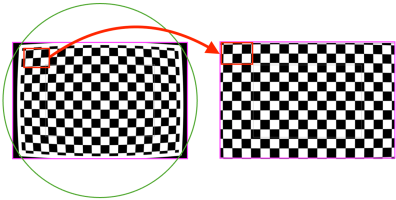

Slippery slope fallacy? That said, I hate the concept of digital correction, not because of where it is today but because of where it could go tomorrow. What is stopping them from making even smaller lenses that cover only an APS-H image circle and then stretching/scaling the result back to full-frame resolution? Should we care if the image is ultimately cleaner, sharper, etc.? I would, on principle, but if they took away the toggle to see the file without corrections, we would probably never know.
