East Wind Photography said:

neuroanatomist said:

midluk said:

East Wind Photography said:

It will be stronger due to the increase in pixel density. Every incremental increase in resolution requires a bit stronger AA filter.

Isn't the AA filter supposed to make the image satisfy the Nyquist–Shannon sampling theorem?

From this I would deduce that the AA filter strength should be proportional to the pixel pitch and therefore higher resolution requires a weaker AA filter.

True – East Wind Photography is incorrect.

Please share some links so we can better understand. Higher pixel density should produce more moiré on finer detail, and therefore a higher degree of AA is required. However, I'm not afraid to stand corrected. Just trying to understand the reason for the opposite.

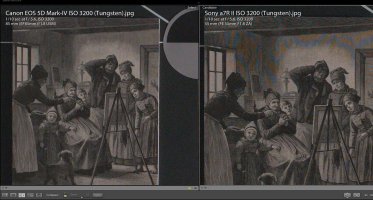

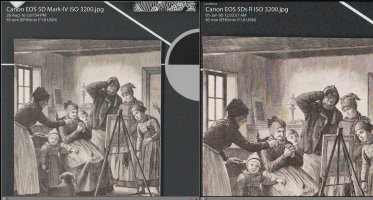

Incremental increases in sensor resolution have pretty consistently caused a loss of sharpness. Resolution does increase detail but the two are different.

I haven't run across anything that delves into clear detail about the 'strength' of an AA filter, but maybe it's confusion about semantics?

An AA filter essentially spreads the incoming light (introduces blur), and the amount of that spread is proportional to the pixel pitch. So, as pixel pitch gets smaller (more MP for the same size sensor), the amount of blur that the AA filter needs to introduce to counteract aliasing also gets smaller. I think convention would say that a filter that introduces less blur is a weaker filter.
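To put rough numbers on that, here is a minimal sketch. It assumes a 36 mm wide sensor and an AA blur of exactly one pixel pitch; both are illustrative assumptions, not a manufacturer's spec, and the function names are made up for this example.

```python
def pixel_pitch_um(sensor_width_mm: float, pixels_across: int) -> float:
    """Approximate pixel pitch in micrometres for a sensor of the given width."""
    return sensor_width_mm * 1000.0 / pixels_across


def aa_blur_um(pitch_um: float, blur_in_pixels: float = 1.0) -> float:
    """Blur spread in micrometres, ASSUMING it is a fixed fraction of the pitch."""
    return blur_in_pixels * pitch_um


# Hypothetical full-frame sensors (36 mm wide) with different pixel counts.
for pixels in (6000, 9000):
    pitch = pixel_pitch_um(36.0, pixels)
    print(f"{pixels} px across: pitch {pitch:.1f} um, AA blur {aa_blur_um(pitch):.1f} um")
```

More pixels across the same width means a smaller pitch, and under this assumption a proportionally smaller blur, i.e. a weaker filter.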

What an AA filter does is add blur to prevent the aliasing (e.g. moiré) caused by repeating patterns in a subject whose period, as projected onto the sensor, is about twice the pixel pitch or shorter (the Nyquist limit: you need at least two pixels per cycle). Incidentally, that's why the AA filter is also called an optical low pass filter (OLPF) – it allows frequencies lower than the cutoff to pass, while blocking (blurring out, in this case) higher frequencies. For example, if a sensor's pixel pitch is 6 µm (the 5DIII is close), then patterns that repeat every 12 µm on the sensor would be at the Nyquist frequency, patterns repeating every 16 µm would be lower than the Nyquist frequency, and patterns repeating every 8 µm would be above it. It's the 'at or above' that the filter is designed to reduce/eliminate.
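Here is a small sketch of that arithmetic, assuming the usual statement of the sampling theorem (at least two pixels per cycle, so the shortest period the sensor can record is twice the pixel pitch). The function names are hypothetical, and it ignores the Bayer array and the finite size of each pixel.

```python
def nyquist_period_um(pitch_um: float) -> float:
    """Shortest pattern period (on the sensor) that can be sampled: two pixels."""
    return 2.0 * pitch_um


def classify(pattern_period_um: float, pitch_um: float) -> str:
    """Say where a pattern sits relative to the sensor's Nyquist frequency."""
    limit = nyquist_period_um(pitch_um)
    if pattern_period_um > limit:
        return "below Nyquist"
    if pattern_period_um == limit:
        return "at Nyquist"
    return "above Nyquist"


def alias_period_um(pattern_period_um: float, pitch_um: float) -> float:
    """Period of the pattern actually recorded after sampling (point-sampling model)."""
    f = pitch_um / pattern_period_um      # pattern frequency in cycles per pixel
    folded = abs(f - round(f))            # fold into the 0 to 0.5 cycles/pixel band
    return float("inf") if folded == 0 else pitch_um / folded


for period in (16.0, 12.0, 8.0):
    print(period, classify(period, 6.0), alias_period_um(period, 6.0))
```

With a 6 µm pitch, an 8 µm pattern is recorded as a false 24 µm pattern in this model, which is the kind of coarse false pattern that shows up as moiré.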

But it's a bit more complex than that, for two reasons. The first is that lenses aren't perfect. As pixel pitch decreases, eventually the blur introduced by the optics will reduce and potentially obviate the need for an AA filter. If you use crappy lenses, you won't have to complain about moiré.
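As one illustration of optics doing the AA filter's job, here is a rough sketch using diffraction only. It assumes an otherwise perfect lens, green light at 0.55 µm, and the standard incoherent diffraction cutoff of 1/(wavelength × f-number); real lens aberrations add more blur on top of this, so treat the numbers as a loose upper bound rather than a rule.

```python
def diffraction_cutoff_cycles_per_um(f_number: float, wavelength_um: float = 0.55) -> float:
    """Highest spatial frequency a diffraction-limited lens can deliver."""
    return 1.0 / (wavelength_um * f_number)


def sensor_nyquist_cycles_per_um(pitch_um: float) -> float:
    """Sensor Nyquist frequency: one cycle per two pixels."""
    return 1.0 / (2.0 * pitch_um)


def lens_prevents_aliasing(f_number: float, pitch_um: float) -> bool:
    """True if the lens passes nothing above the sensor's Nyquist frequency."""
    return diffraction_cutoff_cycles_per_um(f_number) <= sensor_nyquist_cycles_per_um(pitch_um)


# Hypothetical pixel pitches and apertures, purely for illustration.
for pitch in (6.0, 4.0, 2.0):
    for f_number in (8, 16, 22):
        print(f"pitch {pitch} um, f/{f_number}: {lens_prevents_aliasing(f_number, pitch)}")
```

In this simplified model the smaller the pitch, the wider the aperture at which the lens alone already removes everything above Nyquist.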

The second (and for now, more important) reason is that manufacturers make choices regarding the strength of the AA filter – it's not simply 'set it equal to the Nyquist limit for the sensor' and be done.

There are plenty of examples of moiré in images from cameras with an OLPF, I know I've seen it in bird feathers and buildings with my 1D X. Also, moiré is more evident in video than in still photography – that's because it's not just the frequencies of the patterns, it's also the alignment of the patterns in the subject with the pixel array on the sensor. For example, if you take two shots of the same brick wall (same camera, lens, etc.) but move the camera 1 cm to the left, you may see moiré in one image but not the other. But if you're panning a video across that brick wall, you will see the moiré at some point in the footage.
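The alignment effect can be sketched with a toy model. It assumes a sinusoidal stripe pattern with a period of exactly two pixels and pixels that sample a single point at their centre; real pixels integrate over their area, so this only shows the trend. The names are made up for the example.

```python
import math


def sample_stripes(period_px: float, offset_px: float, n_pixels: int = 8) -> list:
    """Point-sample a sinusoidal stripe pattern at pixel centres."""
    return [math.cos(2 * math.pi * (n + offset_px) / period_px) for n in range(n_pixels)]


def contrast(samples: list) -> float:
    """Difference between the brightest and darkest recorded value."""
    return max(samples) - min(samples)


aligned = sample_stripes(period_px=2.0, offset_px=0.0)   # stripes line up with pixels
shifted = sample_stripes(period_px=2.0, offset_px=0.5)   # pattern moved half a pixel

print("aligned contrast:", contrast(aligned))
print("shifted contrast:", contrast(shifted))
```

Same pattern, same sensor, and a half-pixel shift takes the recorded stripes from full contrast to essentially nothing. A pan in video sweeps through every offset, so it passes through the bad alignments sooner or later.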

So, if you're a camera maker you need to decide – do you make the AA filter stronger (set the cutoff lower than the Nyquist frequency for the sensor)? If you do that, you will reduce moiré in both stills and video, and if you make it strong enough, you can make pretty darn sure that none of your users see moiré. But as you make the AA filter stronger, you introduce more blur, and that means softer images. Granted, the softness introduced by an AA filter is very amenable to sharpening, but that can have undesirable consequences too (accentuates noise, but you can do NR, but that softens the image again, etc.). Or, you can make the filter weaker (less blur) – or eliminate it entirely – which means a sharper native image but a higher propensity to show aliasing.
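To see the trade-off in numbers, here is a sketch that models the OLPF as an idealised two-spot beam splitter, whose contrast response is |cos(π · f · d)| for a spot separation d. That model and the separations used are assumptions for illustration; real filters typically split in two directions, and this idealised response rises again above its first null.

```python
import math


def olpf_mtf(freq_cycles_per_px: float, split_px: float) -> float:
    """Contrast passed by an idealised two-spot splitter with separation split_px."""
    return abs(math.cos(math.pi * freq_cycles_per_px * split_px))


def first_null_cycles_per_px(split_px: float) -> float:
    """Lowest frequency the splitter removes completely (its effective cutoff)."""
    return math.inf if split_px == 0 else 1.0 / (2.0 * split_px)


HALF_NYQUIST = 0.25  # cycles per pixel: detail the sensor can comfortably resolve

# Hypothetical separations: none, weak, cutoff at Nyquist, stronger than Nyquist.
for split in (0.0, 0.5, 1.0, 1.25):
    print(
        f"split {split:.2f} px: cutoff {first_null_cycles_per_px(split):.2f} cycles/px, "
        f"contrast at half Nyquist {olpf_mtf(HALF_NYQUIST, split):.2f}"
    )
```

As the separation grows, the cutoff drops to and then below the Nyquist frequency (0.5 cycles per pixel), but the contrast of detail well inside the resolvable range falls too. That loss is the softness being traded for moiré suppression.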

There's also a third reason, concerning your statement that, "Incremental increases in sensor resolution have pretty consistently caused a loss of sharpness." That's partly down to technique and camera build. With a lower resolution sensor, a given amount of camera shake (from any source, including mirror/shutter vibration) or subject motion might be too small, relative to the pixel pitch, to show up in the image. So for the 5DIII's 6 µm pixel pitch, if the camera is vibrating at an amplitude of 2.5 µm, the smear is under half a pixel and you're unlikely to see shake-induced blur. But if you switch to a 5Ds with a 4 µm pixel pitch, that same amount of shake is now more than half a pixel, and you're more likely to see the effect.
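One way to compare the two cameras is to express the same shake as a fraction of a pixel. A minimal sketch, using the rounded 6 µm and 4 µm pitches from above (the function name is made up for the example):

```python
def shake_in_pixels(shake_um: float, pitch_um: float) -> float:
    """Express a shake amplitude as a fraction of one pixel."""
    return shake_um / pitch_um


SHAKE_UM = 2.5  # same vibration amplitude on both bodies

for label, pitch in (("6 um pitch", 6.0), ("4 um pitch", 4.0)):
    print(f"{label}: shake covers {shake_in_pixels(SHAKE_UM, pitch):.2f} pixel")
```

The vibration hasn't changed, but on the denser sensor it spans a larger share of each pixel, so it is more likely to be visible at 100% view.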