Based on the "R" and "RP" naming convention, I'm wondering about maybe even "RS" for the next body if it's resolution focused (a la 5DS). I was also wondering if the 5DS and 5DIV might converge: if the resolution gets high enough, could they just count blocks of 4 pixels as one pixel to reduce the resolution and increase output capacity? For instance, if you had a 100MP camera, could you change modes to treat blocks of 4 pixels as one in a "low resolution" or "speed" mode, jump down to 25MP, and improve shooting speed and low light noise? I'm no engineer so I have no idea if that's feasible, but it sounds interesting to me.

The "s" for higher level sub-models in Canon's naming convention is always lower case:

1Ds Mark III

5Ds / 5Ds R

So it would be "Rs."

I think it is quite possible that the successor to the 5D Mark IV will be an R body, but I also think there is a small chance Canon could release a 5D Mark V (which would, by convention, be an EF mount camera).

I do think there will be both a "normal" resolution "5D" type of body (currently in the 30-35 MP range) and another model with basically the same body and features but a "high" resolution sensor (currently 50-?? MP).

Beyond the added expense of producing a 100MP sensor for users who would only output 25MP files, there would be other disadvantages: four times as much data to read out (with the longer readout time, additional power requirement, and additional heat that entails), plus the processing load of binning 100MP down to 25MP (with its own extra power consumption and heat).
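To put rough numbers on that, here is a minimal sketch of 2x2 binning in plain Python. It assumes the binning is done digitally after every sensel has been read out, and it uses a tiny 4x4 frame of made-up values in place of a real sensor; `bin_2x2` is a hypothetical helper, not anything from Canon's firmware.

```python
def bin_2x2(frame):
    """Sum each non-overlapping 2x2 block of a 2-D list into one output value."""
    rows, cols = len(frame), len(frame[0])
    if rows % 2 or cols % 2:
        raise ValueError("frame dimensions must be even")
    return [
        [
            frame[r][c] + frame[r][c + 1] + frame[r + 1][c] + frame[r + 1][c + 1]
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]


# Made-up sensel values standing in for a (very small) high resolution readout.
frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]

binned = bin_2x2(frame)

sensels_read = len(frame) * len(frame[0])    # every sensel still has to be read
pixels_out = len(binned) * len(binned[0])    # only a quarter as many values come out
readout_ratio = sensels_read // pixels_out   # 4 sensels read per output pixel

print(binned, sensels_read, pixels_out, readout_ratio)
```

The output file is a quarter the size, but the readout and the summing work still scale with the full sensel count. Scale the same ratio up and a 100MP readout feeds a 25MP file.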

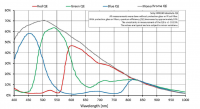

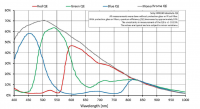

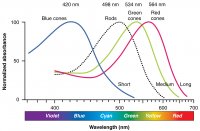

Pixel binning does not simplify color conversion the way most people think it does. Color filters in Bayer masks are not discrete. (Neither are the three types of cones in the human retina.) There's a lot of overlap in response between the "Blue" (actually a violet shade of blue at about 460nm), "Green" (actually a slightly blue tinted green at about 540nm) and "Red" (actually a yellow-orange color with the highest transmissivity at around 600nm, not really what we call "red" at 640nm - all drawings on the internet that show "red", "green", and "blue" squares notwithstanding) filtered sensels.

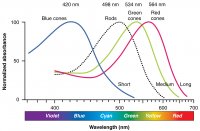

This "overlap" is actually how our eye-brain system creates "color." Some "red" (quite a bit, actually) and "blue" light make it past the "green" filters. Some "green" (quite a bit, actually) makes it past the "red" filters, and even some "red" and more "green" make it past the "blue" filters. The energy from all of those photons is measured the same by each sensel (a/k/a pixel well).

The way we demosaic the monochromatic luminance values collected by sensels behind the three different colored filters mimics the way our brains process the information gathered by our retinas. Even if we had two "green", a "blue", and a "red" filtered sensel for each pixel in our output image, we'd still have to demosaic and weight the color multipliers properly to get accurate color. It takes values from both the "green" sensels most sensitive at 540nm and the "red" sensels most sensitive at 600nm to interpolate the strength of "orange" light centered at 590-600nm. If there were no "overlap" in the response of sensels behind the three different filters, we would not be able to perceive what we call "color" at all! For more on this, google the "Luther-Ives condition" or "Maxwell-Ives criterion."
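A toy model makes the role of the overlap easier to see. The sketch below uses the filter peaks quoted above (460nm, 540nm, 600nm), but the Gaussian curve shape, the 40nm width, and the 20nm box width are assumptions made purely for illustration, not measured filter data. It compares overlapping filters with hypothetical non-overlapping "box" filters for two nearby shades of orange.

```python
import math

# Filter peaks as quoted in the post; everything else here is an assumption.
PEAKS_NM = {"blue": 460.0, "green": 540.0, "red": 600.0}
WIDTH_NM = 40.0  # assumed Gaussian standard deviation, not a measured value


def overlapping_response(wavelength_nm):
    """Relative response of each filtered sensel, modeled as broad Gaussians."""
    return {
        name: math.exp(-0.5 * ((wavelength_nm - peak) / WIDTH_NM) ** 2)
        for name, peak in PEAKS_NM.items()
    }


def box_response(wavelength_nm, half_width_nm=20.0):
    """Hypothetical filters with hard edges and no overlap at all."""
    return {
        name: 1.0 if abs(wavelength_nm - peak) <= half_width_nm else 0.0
        for name, peak in PEAKS_NM.items()
    }


def red_green_ratio(resp):
    """The red/green balance is what lets us place a wavelength between the peaks."""
    return resp["red"] / resp["green"]


# Two slightly different oranges.
ratio_585 = red_green_ratio(overlapping_response(585.0))
ratio_595 = red_green_ratio(overlapping_response(595.0))

# With overlap, the ratio shifts as the wavelength shifts.
print(ratio_585, ratio_595)

# Without overlap, both oranges produce exactly the same sensel values.
print(box_response(585.0), box_response(595.0))
```

In this model the overlapping filters give a red/green ratio that rises as the light moves from 585nm toward 595nm, so the two oranges can be told apart. The box filters return identical values for both, so any difference in hue inside a single band is lost.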

The overlap between our retinas' "green" and "red" cones is even closer - our "red" cones are most sensitive to light at 565nm, or a slightly green tint of yellow!

There's nothing intrinsically different in the portion of the electromagnetic spectrum we call visible light other than the fact our retinas respond chemically to those particular wavelengths and do not respond chemically to other wavelengths in the EM spectrum. Similarly, there's no "color" intrinsic in any particular wavelength of light. Non-human vision systems can perceive the same wavelengths differently, or even not perceive at all some wavelengths humans can see. Color is a construct of our eye-brain system. While it is true that some wavelengths of light will be perceived by humans as a certain color, it's also possible to create the same perception of that "color" by blending the proper amounts of other wavelengths of light. That's how our trichromatic color reproduction systems work. It is also the case that there are some "colors" that cannot be created with a single wavelength of light. Magenta, for example, is how our brains perceive a combination of very "blue" and very "red" wavelengths that are near opposite ends of the visible spectrum. There is no single wavelength of light that can produce a perception of magenta in the human brain.
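The magenta point can be illustrated with a toy three-channel model. This is only a sketch under stated assumptions: the peaks are the sensor filter figures mentioned earlier (460nm, 540nm, 600nm), the Gaussian shape and 40nm width are invented for illustration, and `looks_magenta` is a hypothetical stand-in for "strong blue and red response with a weaker green response", not a real colorimetric definition.

```python
import math

PEAKS_NM = {"blue": 460.0, "green": 540.0, "red": 600.0}
WIDTH_NM = 40.0  # assumed Gaussian standard deviation, for illustration only


def response(wavelength_nm):
    """Toy response of the three channels to a single wavelength."""
    return {
        name: math.exp(-0.5 * ((wavelength_nm - peak) / WIDTH_NM) ** 2)
        for name, peak in PEAKS_NM.items()
    }


def mixed_response(wavelengths_nm):
    """Responses simply add when several wavelengths arrive together."""
    total = {name: 0.0 for name in PEAKS_NM}
    for wavelength in wavelengths_nm:
        for name, value in response(wavelength).items():
            total[name] += value
    return total


def looks_magenta(resp):
    """Hypothetical test: green is weaker than both blue and red."""
    return resp["green"] < min(resp["blue"], resp["red"])


# A blend of very "blue" and very "red" light.
magenta_mix = mixed_response([450.0, 620.0])

# Scan single wavelengths from 380nm to 700nm in 1nm steps for the same pattern.
single_matches = [w for w in range(380, 701) if looks_magenta(response(float(w)))]

print(looks_magenta(magenta_mix), single_matches)
```

In this model the blend passes the test while the scan finds no single wavelength that does, because any one wavelength that excites both the "blue" and "red" channels has to excite the "green" channel in between at least as much as the weaker of the two.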