SanDisk Sounds the Alarm About Near Future Storage Price Hikes & Supply

- Thread starter Canon Rumors

Does anybody actually think AI is worth it? I don't, for the most part. I'm sure there's some good that can come from it, but it seems like a lot of bad stuff. That being said, I don't know all of the uses, so I'm curious about others' opinions or knowledge on the matter.

I use AI daily for work. It has valid and valuable use cases. It's also still in its infancy and will continue to get better over time. Where that leads us (good or bad) is anyone's guess, but it's not going away.

This AI bullshit has to be stopped. Governments have to step in and regulate this sector so that ordinary people can buy memory.

That is never going to happen.

This is also far from the first time that shortages in tech have caused component prices to spike, and it won't be the last. As usual, supply will ramp up and prices will eventually come back down. The earliest this is likely to happen is sometime in 2028, but it's difficult to predict, especially in today's very unstable world.

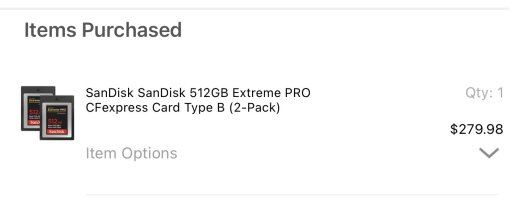

I ordered a couple of 1TB CFexpress cards from Amazon around February. The price rose 10% before they were delivered. Obviously I didn't pay any extra, but it was a close call. I too did it to feed an eventual R7 Mark II. If it doesn't have the slot, I can use them in my R5 Mark II or sell them. Rumours suggest a CFE/SD mix, which seems logical.

roby17269

R5, X2D II 100c, DJI Mavic 4 Pro & Mini 5 Pro

Does anybody actually think AI is worth it? I don't, for the most part. I'm sure there's some good that can come from it, but it seems like a lot of bad stuff. That being said, I don't know all of the uses, so I'm curious about others' opinions or knowledge on the matter.

It also depends on which type of AI we are talking about.

The one behind most of what we're discussing here is generative AI (a misnomer if you ask me: gen AI does not generate new content, it synthesizes from existing content). A lot of economic benefits are touted as the rationale for its current success, but the jury is still out on whether those benefits will justify the current mad rush.

Gen AI is good at refining content and at performing repetitive tasks, not so much at creating new content or making decisions, especially if the content or the decision needs an element of novelty.

Most of the human impact nowadays is negative, inasmuch as companies are pushing gen AI into coding and development activities to save on junior developers. This creates a problem for junior developers, but also for the future, as eventually there will be fewer senior developers, who are still needed... but it is unfashionable to discuss such unsavory topics.

This may be odd, but I feel bad for the kids that want to build gaming rigs. I remember spending every penny I made one summer doing my first one. Some of the PC builder groups are therapy sessions at this point.

If things are that tight, they should buy a used business machine and upgrade it.

That's what I did and still do.

Time to switch to new Chinese brands. These companies are making a killing at the expense of consumers. Time to boycott these greedy companies.

All for-profit corporations are "greedy".

That's what a profit is: excess.

This creates a problem for junior developers, but also for the future, as eventually there will be fewer senior developers, who are still needed... but it is unfashionable to discuss such unsavory topics.

They'll ride the old horses as long as they can and, eventually, drive the industry into the ground, just like in commercial aviation.

This AI bullshit has to be stopped. Governments have to step in and regulate this sector so the ordinary people can buy memory.

"Planned" economies would function even worse.

It depends on what the AI is being used for. All the hype is around artificial image creation and getting answers from the likes of ChatGPT. Neither of those is worth much, and neither is likely to monetize well. I have a fairly small computer with an Intel B50 graphics card in it that I set up to do local AI. It will do most of the image generation that the big dogs do, and Intel AI Playground is free to download, which really calls into question the likelihood of huge data center monetization.

Now, the places where AI is useful. Nearly all modern cameras use some form of AI to assist with autofocus, and the improvement in AF over the last few years has been huge. If you do serious processing of photos, the Topaz suite (which uses AI extensively) is pretty much a must-have, particularly if you shoot in difficult conditions at high ISO. Its noise reduction and sharpening tools are unmatched. Even Adobe is using AI for noise reduction and object removal in Lightroom Classic. That is just in our little neck of the woods. You can question the value of the feature, but Tesla's self-driving cars actually do work remarkably well.

At the other end of the spectrum (and maybe the only place the data centers could see real revenue), AI-driven warfare is currently being demonstrated and will only increase in capability in the future. That one is more than a little scary.

In the end, I don't see any applications for AI that are going to pay for the enormous capex being thrown at data centers, not to mention the power bills. Virtually all the useful applications for AI to date are distributed functions that don't need data centers, except possibly once for training.

You're engaging in the exact conflation that billions of dollars of marketing for generative AI have intended you to make.

Generative AI and classical ML approaches with actual design intent are not analogous or appropriately lumped together.

Classical deep learning applications like noise reduction, subject recognition, etc. do work, and they are used to defend "AI" generally, meaning generative AI, which is slop that has extremely little value outside of generating spam.

It is a classic motte and bailey. "AI" is a completely worthless label that doesn't reflect any of the technologies allegedly included under it.

You're engaging in the exact conflation that billions of dollars of marketing for generative AI have intended you to make.

Generative AI and classical ML approaches with actual design intent are not analogous or appropriately lumped together.

Indeed. I use ML routinely for scientific image analysis, virtual chemical library screening, etc. Very much value added. But even the companies that provide the software/services have taken to calling it AI. Customers expect it, venture backers ask if companies are using "AI-based drug discovery", and the misnomer perpetuates itself.

You're engaging in the exact conflation that billions of dollars of marketing for generative AI have intended you to make.

Generative AI and classical ML approaches with actual design intent are not analogous or appropriately lumped together.

Classical deep learning applications like noise reduction, subject recognition, etc. do work, and they are used to defend "AI" generally, meaning generative AI, which is slop that has extremely little value outside of generating spam.

It is a classic motte and bailey. "AI" is a completely worthless label that doesn't reflect any of the technologies allegedly included under it.

Sorry if I didn't use the generative vs. ML labels, but I did point out that I have a pretty cheap machine in my office that will do "generative AI", and the consequence is that there is not likely a monetization option for data centers to do that kind of "work". Data centers built to do generative AI are really just an extension of the whole "cloud" mindset, which is all about control (marketed as convenience and safety). The number of people compromised when a "cloud" facility gets hacked continues to grow. At some point, customers will figure out that safety is not really one of the features of the cloud. This is particularly true of generative AI, which is constantly integrating your every interaction with it into its database. At some point, your entire persona becomes public. Not a desirable outcome for most folks.

Sorry if I didn't use the generative vs. ML labels, but I did point out that I have a pretty cheap machine in my office that will do "generative AI", and the consequence is that there is not likely a monetization option for data centers to do that kind of "work". Data centers built to do generative AI are really just an extension of the whole "cloud" mindset, which is all about control (marketed as convenience and safety). The number of people compromised when a "cloud" facility gets hacked continues to grow. At some point, customers will figure out that safety is not really one of the features of the cloud. This is particularly true of generative AI, which is constantly integrating your every interaction with it into its database. At some point, your entire persona becomes public. Not a desirable outcome for most folks.

No worries, the label is just a nuisance (imo) and can easily get in the way of communication.

I wholly agree with what you've said there.

Indeed, generative "AI" is completely incapable of being secure if 3rd party models are run (which is what nearly everyone does). All commercial models run on continuous training, and all prompts are used as data. There are many "prompt injection" exploits which can never all be closed with these models.

Cloud-based infrastructure also has many problems with cost and security. In fact, just this week I tested out some new high-capacity local computers that were purchased after I identified our growing cloud costs and came up with this exact local build to offload that cost. With the AI bubble going as it is, we also needed to purchase the machines ASAP due to RAM price hyperinflation etc., as we're touching on in this thread.

Gen AI is good for spam and sometimes good for memes... that's about it. It is just a lossy compression of the internet (a "blurry JPEG of the internet" is one way to put it).

Indeed. I use ML routinely for scientific image analysis, virtual chemical library screening, etc. Very much value added. But even the companies that provide the software/services have taken to calling it AI. Customers expect it, venture backers ask if companies are using ‘AI-based drug discovery’, and the misnomer perpetuates itself.

Yep, ML applications have tons of great uses. Neural networks are simply very efficient at high-dimensional input-based approximation of any output function. And pretty much any time someone tries to point to "AI" successes, they point to specifically researched and designed ML applications with very specific scope/intent... and use that to somehow try to imply that their generative "AI" (lossy copy of Wikipedia) will become conscious soon and start writing sonnets for leisure, so you better invest now.

It can code, they say! But have they tried simply using "git clone"? You can cut out the middle man that way.

I'm not sure if you can post Instagram links here, but this one relates directly to this thread.

I'm not sure if you can post Instagram links here, but this one relates directly to this thread.

Well, it's that, and spam. Half the internet is gen AI slop now.

Time to switch to new Chinese brands. These companies are making a killing at the expense of consumers. Time to boycott these greedy companies.

Sure, let's boycott Micron. Oh wait, they already decided to stop selling to consumers entirely. The entire point here is that the big memory makers do not need to sell to consumers right now because B2B demand is so insanely high.

It would be great if this brought some new players into the market (Chinese or otherwise). The memory market has become too consolidated, too few players. More competition would be good for everyone in both the short and long term.

I have not made any purchases of SSDs or memory cards since 2024. Wow, have prices increased. In Sept. 2024 I purchased several SanDisk 4TB SSDs for $329.00 each from Amazon. Today the same product sells for $726.00 each. Luckily I purchased six of them and should be set for the foreseeable future. The same goes for memory cards: I purchased several CFexpress Type B v4 cards of 1TB or greater capacity and am set for the foreseeable future as well.

Never thought I would see SSDs more than double in price.

I have not made any purchases of SSDs or memory cards since 2024. Wow, have prices increased. In Sept. 2024 I purchased several SanDisk 4TB SSDs for $329.00 each from Amazon. Today the same product sells for $726.00 each. Luckily I purchased six of them and should be set for the foreseeable future. The same goes for memory cards: I purchased several CFexpress Type B v4 cards of 1TB or greater capacity and am set for the foreseeable future as well.

Never thought I would see SSDs more than double in price.

Take a look at RAM prices... probably 4-5x depending on what you have.

This may be odd, but I feel bad for the kids that want to build gaming rigs. I remember spending every penny I made one summer doing my first one. Some of the PC builder groups are therapy sessions at this point.

My nephew was building a gaming rig. He was really into it. He mowed lawns, walked dogs, and did any other job he could to buy parts. He stopped recently when everything kept surging in price. He learned a lot about the economy, but he's pretty heartbroken about it. His friends stopped too.
