The transmission of a sheet of glass is 92%, and this is less than 100% due to 4% of light being reflected back from its front surface and another 4% from its rear. Multi-coat it and the transmission gets close to 100%. It is absolutely impossible for acrylic to have 20% more transmission than 92 or 99+%! And, it's basic optics that the transmission coefficient decreases as refractive index increases because reflection increases (Fresnel equation).
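For anyone who wants to check the 4% per surface figure, here is a minimal sketch of the normal-incidence Fresnel formula, R = ((n - 1) / (n + 1))^2. The index of 1.5 for ordinary glass is my assumption, the function names are made up for illustration, and the sketch ignores absorption and multiple internal reflections.

```python
def fresnel_reflectance(n_material: float, n_ambient: float = 1.0) -> float:
    """Fraction of light reflected at one uncoated surface, normal incidence."""
    return ((n_material - n_ambient) / (n_material + n_ambient)) ** 2


def slab_transmission(n_material: float) -> float:
    """Transmission through two uncoated surfaces (front and rear).

    Simplification: no absorption, no multiple internal reflections.
    """
    r = fresnel_reflectance(n_material)
    return (1.0 - r) ** 2


if __name__ == "__main__":
    # 1.50 is an assumed value for ordinary glass; 1.49 and 1.56 are the
    # indices quoted for acrylic and glass elsewhere in this thread.
    for n in (1.49, 1.50, 1.56):
        print(f"n = {n:.2f}: R per surface = {fresnel_reflectance(n):.4f}, "
              f"T through slab = {slab_transmission(n):.4f}")
```

With n = 1.5 this gives 4% per surface and roughly 92% through the sheet, and the transmission drops as the index goes up.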

Technically, AlanF is correct. I should have said "an Acrylic Lens with embedded Adaptive Floating Optics and Computational Photography algorithms will be a 20% Brighter Lens" rather than using the term "Acrylic has 20% better transmissibility". Those are two VERY DIFFERENT MEANINGS and AlanF's is the scientifically correct one. Technically, Acrylic has 92% transmission at 50 mm thickness, so I would say it's still better than the typical glasses Canon uses.

FIRST THINGS FIRST: Please look at the links below for a little educational series for y'all today, before I discuss WHY our lenses are 20% Brighter (i.e. have more light-gathering ability at a given focal length).

In case anyone is wondering what AlanF is speaking of, here are some Wikipedia links that give background on and illustrate the concepts of refraction, diffraction, Fresnel Equations and Fresnel Lenses, general Interferometry, the Fourier Transform and the famous old Bell Curve, so you understand that we use ALL such concepts in designing and building high-end prime and zoom lenses!

Refraction:

en.wikipedia.org

Refractive Index:

en.wikipedia.org

Diffraction:

en.wikipedia.org

Fresnel Equations:

en.wikipedia.org

Fresnel Lenses:

en.wikipedia.org

Interferometry:

en.wikipedia.org

Fourier Transform:

en.wikipedia.org

Normal Distribution (aka Bell Curve):

en.wikipedia.org

The Refractive Index of Typical Optical-grade Fluorite Glasses used in Canon's higher end glass would be 1.56 and Acrylic would be 1.49, which doesn't sound like a lot of difference between the two but it is. You actually want the LOWER NUMBER index so distortion is not as bad. Ideally, you want the same amount of distortion as through AIR (i.e. the Index of Refraction of Air at Sea Level at 18 Celsius is about 1.0). The curvature of the front and back portions of the lens elements is what gives you the "Distorted Look" of a typical lens. Ideally in modern imaging, you would want NO distortion at all.
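To put numbers on how much a ray bends at those two indices, here is a rough sketch using Snell's law, n1 sin(θ1) = n2 sin(θ2). It only covers a single ray entering a flat surface from air, so it shows bending and not whole-lens distortion. The function name and the 30 degree test angle are my own choices for illustration.

```python
import math


def refraction_angle_deg(incidence_deg: float, n_in: float, n_out: float) -> float:
    """Angle of the refracted ray (degrees from the surface normal), via Snell's law."""
    s = n_in * math.sin(math.radians(incidence_deg)) / n_out
    if abs(s) > 1.0:
        # Only possible when going from a denser to a rarer medium.
        raise ValueError("total internal reflection: no refracted ray")
    return math.degrees(math.asin(s))


if __name__ == "__main__":
    incidence = 30.0  # arbitrary example angle
    for name, n in (("acrylic", 1.49), ("glass", 1.56)):
        inside = refraction_angle_deg(incidence, 1.0, n)
        print(f"{name} (n = {n}): {incidence:.1f} deg in air -> {inside:.2f} deg inside, "
              f"bend of {incidence - inside:.2f} deg")
```

The lower index bends the ray a little less at each surface, and an index of 1.0 would not bend it at all.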

The base reason though that Acrylic is so sought after for lenses is WEIGHT! It is much lighter than glass and with modern interferometry techniques and ray tracing, you can make a lens that is 20% BRIGHTER, SHORTER in LENGTH and LIGHTER in WEIGHT by 50% than the same lens done in Glass.

The Fresnel effect that AlanF speaks of distorts (Bends?!) the light path within the glass (or Acrylic) lens element itself, and the optimum light path is technically UNKNOWN as it exits the back part of the lens. That gives rise to chromatic aberrations, fringing, scintillation and edge artifacts once the light finally hits the image sensor itself. To compensate for this, you model your lens in an Optical-band ray tracing program which takes into account the light absorption characteristics and refraction/diffraction properties of the lens material and the curvature of the lens element itself.

You then make a grid across the entire front and back of the lens elements and compare the CALCULATED path of incoming light rays to the 2D-XY grid of the sensor itself. For expediency's sake, we use a SQUARE sensor aspect ratio at pixel resolutions such as 4096 by 4096, 8192 by 8192 or 16384 by 16384 pixels. The square sits within the lens mount circle area where a Medium Format, Full Frame, APS-C, Micro 4/3rds or 2/3-inch sensor would be optically placed, and it matches the actual photosite size in microns we wish to model.
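As a rough illustration of the grid sizing, here is a sketch that fits the largest square inside a circle and works out the photosite pitch for a given pixel count. Reading "within the lens mount circle" as a square inscribed in the image circle is my assumption, as are the function names. The 43.27 mm diameter is simply the diagonal of a 36 x 24 mm Full Frame sensor.

```python
import math


def inscribed_square_side_mm(circle_diameter_mm: float) -> float:
    """Side of the largest square that fits inside a circle of the given diameter."""
    return circle_diameter_mm / math.sqrt(2.0)


def photosite_pitch_um(square_side_mm: float, pixels_per_side: int) -> float:
    """Centre-to-centre photosite spacing in microns for a square pixel grid."""
    return square_side_mm * 1000.0 / pixels_per_side


if __name__ == "__main__":
    # Assumed image circle: the diagonal of a 36 x 24 mm Full Frame sensor.
    circle_mm = math.hypot(36.0, 24.0)
    side_mm = inscribed_square_side_mm(circle_mm)
    for pixels in (4096, 8192, 16384):
        pitch = photosite_pitch_um(side_mm, pixels)
        print(f"{pixels} x {pixels} on a {side_mm:.2f} mm square: {pitch:.2f} um pitch")
```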

When you model your lens, each incoming light ray will have a highly specific light path that ends up falling on a specific photosite of the destination image sensor. You can use interpolation to model in-between resolutions and larger/smaller sensor sizes. When the light ray at grid coordinate x:200, y:200 in from the upper-left corner of the front element of a lens is tracked, we want to ENSURE that, through the ENTIRE light path, the photon stream will fall on photosite grid location x:200, y:200 of the sensor. Any deviation NEEDS to be compensated for via a set of lookup-table-based correction factors that are applied to luminance, chroma, saturation and actual coordinate position. The final CORRECTED pixel value will then represent an ideal pixel value of a light ray that has been optimally waveguided through the entire lens assembly.
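To make the lookup table idea concrete, here is a minimal sketch of applying per-photosite corrections to a small luminance image. The table layout (an integer x/y offset plus a luminance gain per photosite) and the name `apply_lut` are hypothetical and not our actual format. Chroma and saturation corrections are left out, and it uses whole-pixel offsets with no interpolation.

```python
from typing import Dict, List, Tuple

# Hypothetical table entry: (dx, dy, luminance_gain) for one photosite.
Correction = Tuple[int, int, float]


def apply_lut(image: List[List[float]],
              lut: Dict[Tuple[int, int], Correction]) -> List[List[float]]:
    """Return a corrected copy of `image` (rows of luminance values).

    For each photosite (x, y) listed in `lut`, the corrected value is taken
    from where the light actually landed, (x + dx, y + dy), and scaled by the
    luminance gain. Photosites not in the table are left unchanged.
    """
    height, width = len(image), len(image[0])
    corrected = [row[:] for row in image]
    for (x, y), (dx, dy, gain) in lut.items():
        # Clamp the source coordinate so offsets near the edge stay in range.
        src_x = min(max(x + dx, 0), width - 1)
        src_y = min(max(y + dy, 0), height - 1)
        corrected[y][x] = image[src_y][src_x] * gain
    return corrected


if __name__ == "__main__":
    raw = [[0.0, 0.0, 0.0],
           [0.0, 0.0, 8.0],   # light meant for (1, 1) landed one pixel right
           [0.0, 0.0, 0.0]]
    table = {(1, 1): (1, 0, 1.25)}
    print(apply_lut(raw, table)[1][1])
```

A real table would need sub-pixel offsets and per-channel factors, but the lookup-then-correct step has the same shape.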

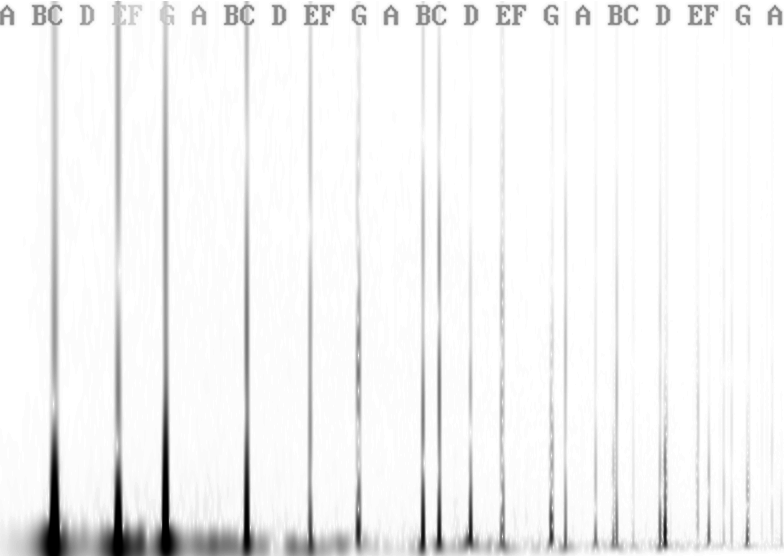

When we designed our lenses, we first modeled and raytraced them in software. Then, in the real world, we shot monochromatic light (i.e. a laser) at various wavelengths corresponding to the Red, Green, Blue, Yellow, Violet, etc. rainbow colours within the optical EM bands. For each sensor resolution, we measured the reduction in amplitude, the slight frequency shift on light wave exit from the back element, and the POSITION and coning (diffraction) of the exiting light path(s) compared to the incoming grid position at the front lens element. Those measurements were turned into a lookup table which we embed into each lens, so that any camera obtains and then applies a pixel 2D-XY positional correction factor, a luminance value correction factor and a chroma value (i.e. colour value) correction factor to the photosite located at a specific 2D-XY position on the sensor. Since there are microlenses and colour filters on top of each photosite, we also take THAT into account and model WHAT an ideal pixel value would be based upon a given focal length, iris setting, focus value, neutral density filter value, and other correction factors which we also build into our lookup tables.
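Here is a rough sketch of how measurements like these could be turned into table entries, for one wavelength and one sensor resolution. The `SpotMeasurement` record and `build_lut` function are hypothetical names for illustration, not our production code. It keeps only the positional offset and an amplitude-ratio gain, and leaves out frequency shift, coning and chroma.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass(frozen=True)
class SpotMeasurement:
    """One laser shot: where the ray should land versus where it did land."""
    expected_xy: Tuple[int, int]   # grid position at the front element
    measured_xy: Tuple[int, int]   # photosite actually hit on the sensor
    amplitude_in: float            # amplitude entering the lens
    amplitude_out: float           # amplitude measured behind the back element


def build_lut(measurements: Iterable[SpotMeasurement]
              ) -> Dict[Tuple[int, int], Tuple[int, int, float]]:
    """Map each expected photosite to (dx, dy, luminance_gain)."""
    lut = {}
    for m in measurements:
        if m.amplitude_out <= 0.0:
            continue  # no usable signal, so no correction can be derived
        dx = m.measured_xy[0] - m.expected_xy[0]
        dy = m.measured_xy[1] - m.expected_xy[1]
        gain = m.amplitude_in / m.amplitude_out  # undo the measured loss
        lut[m.expected_xy] = (dx, dy, gain)
    return lut


if __name__ == "__main__":
    shots = [SpotMeasurement((200, 200), (201, 199), 1.0, 0.5)]
    print(build_lut(shots))
```

In practice you would build one such table per wavelength, focal length, iris setting and so on, which is why the tables get large.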

This means the light paths and pixel values modeled from monochromatic light path ray traces will result in a proper correction factor being applied to each pixel in a 2D-XY grid that will result in a super-sharp, perfectly Bokeh'ed and stable/non-distorted image no matter the focal length and destination image sensor size and pixel count! Since we use MULTIPLE lens elements in a single lens body, ALL individual lens elements are modeled together as a singular UNIT so all individual distortions can be accounted for in our final pixel value lookup tables for each image sensor resolution and sensor size.

The real-time adaptive optics are ALSO taken into account so we can ESTIMATE what the ideal pixel value SHOULD be once it filters through various atmospheric effects and distortions at distances from below one metre up to 100 km.

This means in the hottest desert or roadway environments with all that heat shimmer boiling up from the desert plain or hot pavement, you will get an UNDISTORTED CRYSTAL CLEAR IMAGE that accurately represents what is actually there in the far background!

P.S.

Please note that ALL ABOVE DESCRIPTIONS are now Fully Free and Open Source under GPL-3 Licence Terms for BOTH Hardware and Software. ANYONE and EVERYONE is fully free and able to modify, create, manufacture, sell/resell lenses for any type of imaging system using our designs with NO ROYALTY PAYMENTS REQUIRED so long as you follow ALL the tenets of the GPL-3 Open Source Licence Terms!

V